Featured

The AI Native Dev - from Copilot today to AI Native Software Development tomorrow

Tessl

On the Bus with Troy Vollhoffer

Country Thunder | Pod People

Sourcery

Sourcery with Molly O'Shea

The Coral Capital Podcast

Coral Capital

All About Change

Jay Ruderman

History Shorts

History Shorts

Unsubscribe Podcast

UnsubscribePodcast

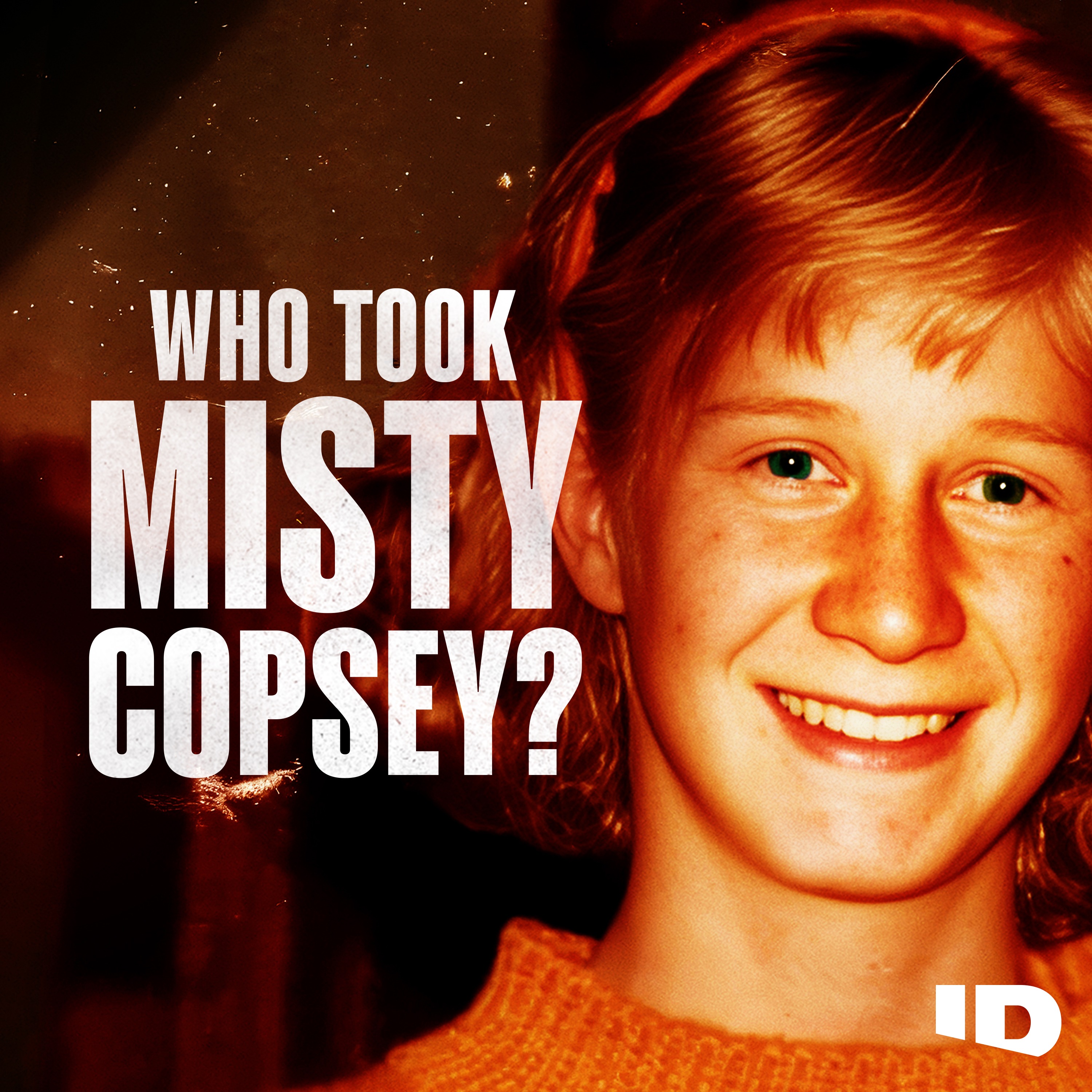

Crime, Conspiracy, Cults and Murder

Kallmekris | QCODE
